By Andre Perunicic | December 13, 2017

In this post, I will highlight a few ways to save images while scraping the web through a headless browser. The simplest solution would be to extract the image URLs from the headless browser and then download them separately, but what if that’s not possible? Perhaps the images you need are generated dynamically or you’re visiting a website which only serves images to logged-in users. Maybe you just don’t want to put unnecessary strain on their servers by requesting the image multiple times. Whatever your motivation, there are plenty of options at your disposal.

The techniques covered in this post are roughly split into those that execute JavaScript on the page and those that try to extract a cached or in-memory version of the image. I will use Puppeteer, a JavaScript browser automation framework that uses the DevTools Protocol API to drive a bundled version of Chromium, but you should be able to achieve similar results with other headless technologies, like Selenium. To make things concrete, I’ll mostly be extracting the Intoli logo rendered as a PNG, JPG, and SVG from this very page.

The first two images are 605 x 605 pixels, but they appear smaller on the screen because they are placed in <div> elements that restrict their size.

Each image uses its file extension as its id attribute, e.g.,

<img id='jpg' src='img/logo.jpg' />

so that they can easily be selected, e.g. document.querySelector('#jpg').

Introduction to Puppeteer

To get started, install Yarn (unless you prefer a different package manager), create a new project folder, and install Puppeteer:

```shell
mkdir image-extraction
cd image-extraction
yarn add puppeteer
```

The last line will download and configure a copy of Chromium to be used by Puppeteer. Let’s see what a script that visits this page and takes a screenshot of the Intoli logo looks like.

```javascript
const puppeteer = require('puppeteer');

(async () => {
  // Set up browser and page.
  const browser = await puppeteer.launch();
  const page = await browser.newPage();
  await page.setViewport({ width: 1280, height: 926 });

  // Navigate to this blog post and wait until the SVG logo is in the DOM.
  await page.goto('https://intoli.com/blog/saving-images/');
  await page.waitForSelector('#svg');

  // Select the #svg img element and save a screenshot of it.
  const svgImage = await page.$('#svg');
  await svgImage.screenshot({
    path: 'logo-screenshot.png',
    omitBackground: true,
  });

  await browser.close();
})();
```

During development, you may wish to start Puppeteer with

puppeteer.launch({headless: false})

and comment out the await browser.close() line in order to see exactly what’s going on and have a chance to manually interact with the page before closing the browser yourself.

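On Linux, you may have to install additional system packages and pass a few extra options to launch the browser. The executablePath option points Puppeteer at a separately installed Chrome instead of the bundled Chromium, so you only need it if you want that: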

```javascript
const browser = await puppeteer.launch({
  headless: false,
  args: ['--no-sandbox', '--disable-setuid-sandbox'],
  executablePath: 'google-chrome-unstable',
});
```

If you save the script as get-intoli-logo.js, you can execute it from the project’s base directory with

```shell
node get-intoli-logo.js
```

If everything goes according to plan, this script will save a PNG screenshot of the Intoli logo SVG shown above to logo-screenshot.png.

The omitBackground: true setting hides the default white background, which preserves the SVG’s transparency in the screenshot. The screenshot() method accepts a few other options as well.

Since this is a screenshot of the SVG, the image is necessarily re-encoded and sized exactly as it is rendered on the page. If that’s good enough for your purposes, though, this is probably the easiest method to use.

Extracting Images via JavaScript on the Page

Puppeteer’s page.evaluate() method provides an easy way to execute a JavaScript function in the context of the current page and get back its return value.

One strategy for getting images from a webpage is therefore to extract the raw image data using JavaScript and pass it back to the Node script for saving.

However, Puppeteer serializes the return values of evaluated functions, so they must be JSON-serializable. The typically binary image data therefore has to be encoded as a string first.

Luckily, this is easy to do with Data URLs, which are simply strings of the form

data:<MIME type>;base64,<base64-encoded image data>

and can be parsed in Node with something like

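```javascript
const parseDataUrl = (dataUrl) => {
  // Split "data:<mime>;base64,<data>" into its MIME type and decoded bytes.
  const matches = dataUrl.match(/^data:(.+);base64,(.+)$/);
  // match() returns null (not a shorter array) when the string doesn't fit the pattern.
  if (!matches) {
    throw new Error('Could not parse data URL.');
  }
  return { mime: matches[1], buffer: Buffer.from(matches[2], 'base64') };
};
```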
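Going the other way is a one-liner in Node, and it can be handy for feeding parseDataUrl known input while testing. The following is a minimal sketch, and toDataUrl is a hypothetical name, not part of Puppeteer or Node:

```javascript
// Hypothetical helper (the inverse of parseDataUrl): wrap raw bytes in a base64 data URL.
const toDataUrl = (mime, bytes) =>
  `data:${mime};base64,${Buffer.from(bytes).toString('base64')}`;

// The first four bytes of every PNG file: 0x89 followed by "PNG".
console.log(toDataUrl('image/png', Buffer.from([0x89, 0x50, 0x4e, 0x47])));
// prints "data:image/png;base64,iVBORw=="
```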

In this section we will explore a few methods for getting Data URLs from images on the page and saving them to the filesystem via Node’s fs module.

Unfortunately, any method based on JavaScript running in the page suffers from CORS-related limitations.

In particular, on-page JavaScript cannot read the data of an image downloaded from a different origin unless that origin explicitly allows it through CORS headers.

If this affects you, feel free to skip over this section since later parts of the post will cover ways to get around this problem.
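To decide up front whether the on-page methods are worth trying for a given image, you can compare the image’s origin with the page’s. The following is a rough, hypothetical pre-check (canReadWithoutCors is not a browser API). It ignores CORS headers entirely, so treat its answer as a hint. It also assumes that images embedded as data: URLs are readable, since browsers generally treat them as same-origin.

```javascript
// Hypothetical helper: is on-page JavaScript likely to be able to read this image?
// Only origins are compared. A cross-origin image served with permissive CORS headers
// (and loaded with a crossorigin attribute) would still be reported as false.
const canReadWithoutCors = (imageSrc, pageUrl) => {
  // Resolve relative src values such as 'img/logo.jpg' against the page URL.
  const imageUrl = new URL(imageSrc, pageUrl);
  // Assumption: data: URL images are treated as same-origin and don't taint a canvas.
  if (imageUrl.protocol === 'data:') {
    return true;
  }
  return imageUrl.origin === new URL(pageUrl).origin;
};

console.log(canReadWithoutCors('img/logo.jpg', 'https://intoli.com/blog/saving-images/'));
// prints true
```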

Extract Image from a New Canvas

The first method I’ll talk about creates a blank canvas element, writes the target image onto it, and extracts the image data as a Data URL. If the image data comes from a different origin, this will cause the canvas to become tainted and trying to get the image data out of the canvas will throw an error.

```javascript
const getDataUrlThroughCanvas = async (selector) => {
  // Create a new image element with unconstrained size.
  const originalImage = document.querySelector(selector);
  const image = document.createElement('img');
  image.src = originalImage.src;

  // Ensure the image is loaded before reading its dimensions.
  await new Promise((resolve, reject) => {
    if (image.complete && image.naturalWidth > 0) {
      resolve();
      return;
    }
    image.addEventListener('load', () => resolve());
    image.addEventListener('error', () => reject(new Error(`Could not load ${image.src}`)));
  });

  // Create a canvas at the image's intrinsic size and draw the image onto it.
  const canvas = document.createElement('canvas');
  canvas.width = image.naturalWidth;
  canvas.height = image.naturalHeight;
  const context = canvas.getContext('2d');
  context.drawImage(image, 0, 0);

  // Throws a SecurityError if the canvas was tainted by a cross-origin image.
  return canvas.toDataURL();
};
```

By default, canvas.toDataURL() produces a Data URL of type image/png, so make sure to either save the extracted image as a .png file or pass a different type to toDataURL(), e.g. canvas.toDataURL('image/jpeg', 0.92) for a JPEG at 92% quality.

Note also that creating a new image element is an easy way to guarantee the saved image will have the original image’s dimensions.

To save the data locally, add a block like the following to the async body of a script like get-intoli-logo.js.

```javascript
const fs = require('fs');

try {
  // Run getDataUrlThroughCanvas() inside the page, then decode the result in Node.
  const dataUrl = await page.evaluate(getDataUrlThroughCanvas, '#jpg');
  const { buffer } = parseDataUrl(dataUrl);
  fs.writeFileSync('logo-canvas.png', buffer);
} catch (error) {
  console.log(error);
}
```

This will create a PNG copy of the JPEG logo at its full size of 605 x 605 pixels.
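Because the canvas method always re-encodes, it can be worth confirming which format actually landed on disk. Binary image formats start with fixed "magic" bytes. PNG files begin with 0x89 followed by "PNG", JPEGs with 0xFF 0xD8 0xFF, and GIFs with "GIF8". A minimal hypothetical check, sniffImageMime, could look like the sketch below. It does not cover SVG, which is plain text.

```javascript
// Hypothetical helper: identify a binary image format from the first bytes of a buffer.
const SIGNATURES = [
  { mime: 'image/png', bytes: [0x89, 0x50, 0x4e, 0x47, 0x0d, 0x0a, 0x1a, 0x0a] },
  { mime: 'image/jpeg', bytes: [0xff, 0xd8, 0xff] },
  { mime: 'image/gif', bytes: [0x47, 0x49, 0x46, 0x38] }, // "GIF8" (GIF87a or GIF89a)
];

const sniffImageMime = (buffer) => {
  const match = SIGNATURES.find(({ bytes }) =>
    buffer.length >= bytes.length && bytes.every((byte, i) => buffer[i] === byte));
  // null means "not one of the formats above", not necessarily "not an image".
  return match ? match.mime : null;
};
```

Calling sniffImageMime(fs.readFileSync('logo-canvas.png')) after the snippet above should return 'image/png'.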

(Re-)Fetch the Image from the Server

If you aren’t satisfied with a re-encoded copy of the image, you can fetch the original file instead.

This doesn’t actually mean the image will be re-transmitted over the network: unless the server doesn’t allow caching or requires re-validation through the Cache-Control header, the image will likely just be grabbed from the cache.

```javascript
const getDataUrlThroughFetch = async (selector, options = {}) => {
  const image = document.querySelector(selector);
  const url = image.src;

  const response = await fetch(url, options);
  if (!response.ok) {
    throw new Error(`Could not fetch image (status ${response.status})`);
  }

  // Read the body as a Blob and let FileReader encode it as a data URL.
  const data = await response.blob();
  const reader = new FileReader();
  return new Promise((resolve, reject) => {
    reader.addEventListener('load', () => resolve(reader.result));
    reader.addEventListener('error', () => reject(reader.error));
    reader.readAsDataURL(data);
  });
};
```

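If you absolutely need to avoid extra server traffic, you can pass { cache: 'only-if-cached', mode: 'same-origin' } as the options. This makes fetch() use a (possibly stale) cached copy and otherwise fail with an error. The Fetch standard only permits the only-if-cached cache mode together with mode: 'same-origin':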

```javascript
const assert = require('assert');

try {
  // Only accept a cached copy; fetch() fails instead of going to the network.
  const options = { cache: 'only-if-cached', mode: 'same-origin' };
  const dataUrl = await page.evaluate(getDataUrlThroughFetch, '#svg', options);
  const { mime, buffer } = parseDataUrl(dataUrl);
  assert.strictEqual(mime, 'image/svg+xml');
  fs.writeFileSync('logo-fetch.svg', buffer);
} catch (error) {
  console.log(error);
}
```

This will again not work for cross-origin images without headers that explicitly allow cross-origin access. One way to get around this would be to navigate to the URL of the image directly, and then fetch it, similarly to the above. The image will still likely be grabbed from the cache, but you’ll need to navigate back and repeat the process for any other images. You do get the image in its original form, though, which is nice.
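As an aside, the FileReader step in getDataUrlThroughFetch() can also be written with Blob.arrayBuffer() and btoa(). Both exist in modern browsers and, as globals, in Node 18 and later. The following is a sketch under that assumption, and blobToDataUrl is a hypothetical name. Because page.evaluate() only serializes the single function you pass it, this code would have to be inlined into the evaluated function rather than called as a separate helper.

```javascript
// Alternative to FileReader: base64-encode a Blob's bytes with btoa().
const blobToDataUrl = async (blob) => {
  const bytes = new Uint8Array(await blob.arrayBuffer());
  // btoa() expects a "binary string" (one char per byte); build it in chunks
  // so large images don't exceed the engine's argument-count limit.
  let binary = '';
  const chunkSize = 0x8000;
  for (let i = 0; i < bytes.length; i += chunkSize) {
    binary += String.fromCharCode(...bytes.subarray(i, i + chunkSize));
  }
  const mime = blob.type || 'application/octet-stream';
  return `data:${mime};base64,${btoa(binary)}`;
};
```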

Extracting Images Using the DevTools Protocol

While evaluating JavaScript on the page is easy, we’ve seen that it comes with its own set of potentially undesirable limitations. Luckily, we can get around most of them by exploiting the fact that Puppeteer communicates with Chromium through the DevTools Protocol API. Roughly, the API lets you accomplish anything you could within Chrome’s DevTools pane (and more). Calls to the API are sent over a WebSocket opened by Chromium and consist of a method name and a parameters payload. Each method falls within a particular domain, like “Network” or “Storage,” and it’s generally pretty easy to figure out what you need to call by searching through the documentation.
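The wire format is plain JSON. Each message carries a numeric id, which lets the client match the eventual response to its request, a "Domain.method" name, and a params object. The following hypothetical sketch only builds the message strings and does not open a WebSocket:

```javascript
// Hypothetical sketch of DevTools Protocol request messages.
// Each request gets a fresh id so that its response (which echoes the id) can be matched.
const createMessageFactory = () => {
  let nextId = 1;
  return (method, params = {}) => JSON.stringify({ id: nextId++, method, params });
};

const makeMessage = createMessageFactory();
console.log(makeMessage('Page.getResourceTree'));
// prints {"id":1,"method":"Page.getResourceTree","params":{}}
```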

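Puppeteer doesn’t have a public interface for making arbitrary DevTools Protocol calls, but its private interface is very easy to use: you simply specify the method and parameters in a call to page._client.send(). Newer Puppeteer releases also offer a public route: page.target().createCDPSession() returns a CDPSession whose send() method takes the same arguments, and private fields like page._client may not exist there.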

For example, let’s take a peek at the resources that are attached to the main frame of this page.

```javascript
const puppeteer = require('puppeteer');

(async () => {
  const browser = await puppeteer.launch();
  const page = await browser.newPage();
  await page.setViewport({ width: 1280, height: 926 });
  await page.goto('https://intoli.com/blog/saving-images/');

  try {
    // Ask Chromium for the frame tree and list the main frame's resources.
    const tree = await page._client.send('Page.getResourceTree');
    for (const resource of tree.frameTree.resources) {
      console.log(resource);
    }
  } catch (e) {
    console.log(e);
  }

  await browser.close();
})();
```

Here, tree is an object of the form

```
{ frameTree:
   { frame:
      { id: '(57F9E508E015A51878DE5499D7080C43)',
        loaderId: '27131.2',
        url: 'https://intoli.com/blog/saving-images/',
        securityOrigin: 'https://intoli.com',
        mimeType: 'text/html' },
     childFrames: [ [Object], [Object], [Object] ],
     resources:
      [ [Object],
        [Object],
        [Object],
        // ...
        [Object] ] } }
```

so saving the above script as log-resource-list.js and running it with node log-resource-list.js prints something like the following.

```
{ url: 'https://intoli.com/blog/saving-images/img/logo.svg',
  type: 'Image',
  mimeType: 'image/svg+xml',
  lastModified: 1512436219,
  contentSize: 5267 }
{ url: 'https://intoli.com/css/style.default.css',
  type: 'Stylesheet',
  mimeType: 'text/css',
  lastModified: 1512343332,
  contentSize: 75574 }
// ...
```

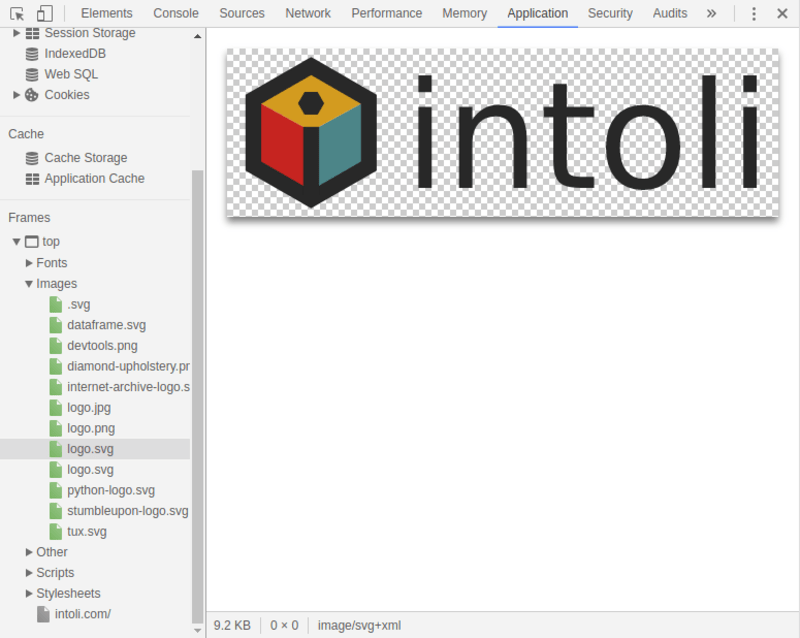

These are just the resources visible in the Frames section of the Application tab of the DevTools panel.
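The loop above only prints the main frame’s resources. Images inside iframes live under childFrames. Page.getResourceContent needs the id of the frame that owns a resource, so it helps to keep that id around. The following hypothetical helper walks the whole tree. It assumes the response shape shown above (frame, resources, and optional childFrames on each node).

```javascript
// Hypothetical helper: collect every image resource in a Page.getResourceTree result,
// recursing into child frames and recording which frame each image belongs to.
const collectImageResources = (frameTree) => {
  const images = (frameTree.resources || [])
    .filter((resource) => resource.type === 'Image')
    .map((resource) => ({
      frameId: frameTree.frame.id,
      url: resource.url,
      mimeType: resource.mimeType,
    }));
  for (const child of frameTree.childFrames || []) {
    images.push(...collectImageResources(child));
  }
  return images;
};
```

In the script above, collectImageResources(tree.frameTree) would replace the loop over tree.frameTree.resources.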

A different method, Page.getResourceContent, can be used to actually grab the contents of these resources.

```javascript
const assert = require('assert');

const getImageContent = async (page, url) => {
  const { content, base64Encoded } = await page._client.send(
    'Page.getResourceContent',
    { frameId: String(page.mainFrame()._id), url },
  );
  assert.equal(base64Encoded, true);
  return content;
};
```

From the script running Puppeteer, we would then call

```javascript
try {
  // Look up the logo's absolute URL in the page, then pull its content from Chromium.
  const url = await page.evaluate(() => document.querySelector('#svg').src);
  const content = await getImageContent(page, url);
  const contentBuffer = Buffer.from(content, 'base64');
  fs.writeFileSync('logo-extracted.svg', contentBuffer);
} catch (e) {
  console.log(e);
}
```

which would download the SVG logo to logo-extracted.svg.
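The protocol’s base64Encoded flag exists because Page.getResourceContent can also return content as plain text. In that case the assertion in getImageContent() would fail. If you would rather accept both forms, a small hypothetical converter could replace the assertion and the Buffer.from() call. It assumes that unencoded text content is UTF-8.

```javascript
// Hypothetical helper: turn a Page.getResourceContent result into a Buffer.
// base64Encoded === true  -> content is base64 and must be decoded.
// base64Encoded === false -> content is already text (assumed UTF-8 here).
const contentToBuffer = ({ content, base64Encoded }) =>
  Buffer.from(content, base64Encoded ? 'base64' : 'utf8');
```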

A brief aside: if you take a look at the data being transmitted to Chromium by Puppeteer when getImageContent() is called, something like this appears:

```
{ id: 17,
  method: 'Target.sendMessageToTarget',
  params:
   { sessionId: '(57F9E508E015A51878DE5499D7080C43):1',
     message: '{"id":14,"method":"Page.getResourceContent","params":{"frameId":"(57F9E508E015A51878DE5499D7080C43)","url":"https://intoli.com/blog/saving-images/img/logo.svg"}}' } }
```

That’s because page._client.send() helpfully wraps up the call so that it’s associated with the page you’re on.

If you want to “directly” communicate with the underlying Chrome instance, use page._connection.send() and explore the source for lib/Connection.js if you’re stuck.
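In the envelope shown in this aside, the inner call is itself a JSON string stored in params.message. If you log protocol traffic yourself, a hypothetical helper like this one can recover the actual method being called:

```javascript
// Hypothetical helper: unwrap a Target.sendMessageToTarget envelope to get the inner call.
// Messages that aren't wrapped are returned unchanged.
const unwrapTargetMessage = (envelope) => {
  if (envelope.method !== 'Target.sendMessageToTarget') {
    return envelope;
  }
  return JSON.parse(envelope.params.message);
};
```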

Saving All Images

If you want to save all images you encounter and sort through them later, that’s also possible with Puppeteer. Handling responses as they come in is particularly easy because Puppeteer officially supports it through the page’s 'response' event. For example, the following script saves all images as they’re downloaded.

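```javascript
const fs = require('fs');
const puppeteer = require('puppeteer');

(async () => {
  const browser = await puppeteer.launch();
  const page = await browser.newPage();
  await page.setViewport({ width: 1280, height: 926 });

  // Make sure the output directory exists before any responses arrive.
  fs.mkdirSync('images', { recursive: true });

  let counter = 0;
  page.on('response', async (response) => {
    const matches = /.*\.(jpg|png|svg|gif)$/.exec(response.url());
    if (matches && (matches.length === 2)) {
      const extension = matches[1];
      // Reserve a number before awaiting so concurrent responses don't overwrite each other.
      const index = counter;
      counter += 1;
      try {
        const buffer = await response.buffer();
        fs.writeFileSync(`images/image-${index}.${extension}`, buffer);
      } catch (error) {
        // Some responses, e.g. redirects, have no body to save.
        console.log(error);
      }
    }
  });

  await page.goto('https://intoli.com/blog/saving-images/');
  // Give late-loading images ten seconds to arrive.
  await new Promise((resolve) => setTimeout(resolve, 10000));

  await browser.close();
})();
```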

Running this places all the target images into the images/ subdirectory.
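The URL regex in that script misses images whose URLs carry a query string (logo.jpg?v=2) or use other extensions such as .jpeg or .webp. One way to loosen it is to match against the parsed URL’s path only. The following is a hypothetical sketch:

```javascript
// Hypothetical helper: pick a file extension from an image URL's path,
// ignoring any query string or fragment. Returns null for non-image or invalid URLs.
const imageExtensionFromUrl = (url) => {
  let pathname;
  try {
    pathname = new URL(url).pathname;
  } catch (error) {
    return null; // Not an absolute URL.
  }
  const matches = /\.(jpe?g|png|svg|gif|webp)$/i.exec(pathname);
  return matches ? matches[1].toLowerCase() : null;
};
```

Inside the response handler, const extension = imageExtensionFromUrl(response.url()); if (extension) { ... } would take the place of the regex check.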

More granular selection, such as saving only specific images targeted on the page, can be achieved with a Promise-based system and is left as an exercise for the reader.
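As a starting point for that exercise, the following hypothetical sketch wraps the 'response' event in a promise. The promise resolves with the first response whose URL satisfies a predicate. It only assumes an EventEmitter-style object with on() and off() whose responses expose url(), which a Puppeteer page provides. Recent Puppeteer versions also ship page.waitForResponse(), which covers the same need.

```javascript
// Hypothetical sketch: resolve with the first 'response' whose URL matches, or reject on timeout.
const waitForMatchingResponse = (emitter, predicate, timeoutMs = 30000) =>
  new Promise((resolve, reject) => {
    const onResponse = (response) => {
      if (predicate(response.url())) {
        clearTimeout(timer);
        emitter.off('response', onResponse);
        resolve(response);
      }
    };
    const timer = setTimeout(() => {
      emitter.off('response', onResponse);
      reject(new Error('Timed out waiting for a matching response'));
    }, timeoutMs);
    emitter.on('response', onResponse);
  });
```

With a Puppeteer page, you would start waiting before navigating: const logoResponse = waitForMatchingResponse(page, (url) => url.endsWith('/img/logo.svg')); followed by page.goto() and then (await logoResponse).buffer().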

Conclusion

We saw how to extract images by evaluating JavaScript on the page, and the limitations therein. We also explored a bit of the DevTools Protocol API and how Puppeteer uses it. Basically, to get some images out of the browser: spin up Puppeteer, choose the technique that best suits you, and Bob’s your uncle.

Even if extracting images isn’t your immediate goal (and you made it this far!), I hope that you enjoyed learning about how to take first steps with Puppeteer. Browser automation is extremely exciting right now, and we are always taking advantage of the latest available tech here at Intoli. If you’re looking for help on an automation project, don’t hesitate to get in touch. Leave your thoughts and comments below, and if you enjoyed this post consider subscribing to our mailing list!
